Dynamiq

Build, deploy, and monitor enterprise-grade AI agents and agentic workflows — all in your own infrastructure.

How Dynamiq Works: From Prototype to Production AI

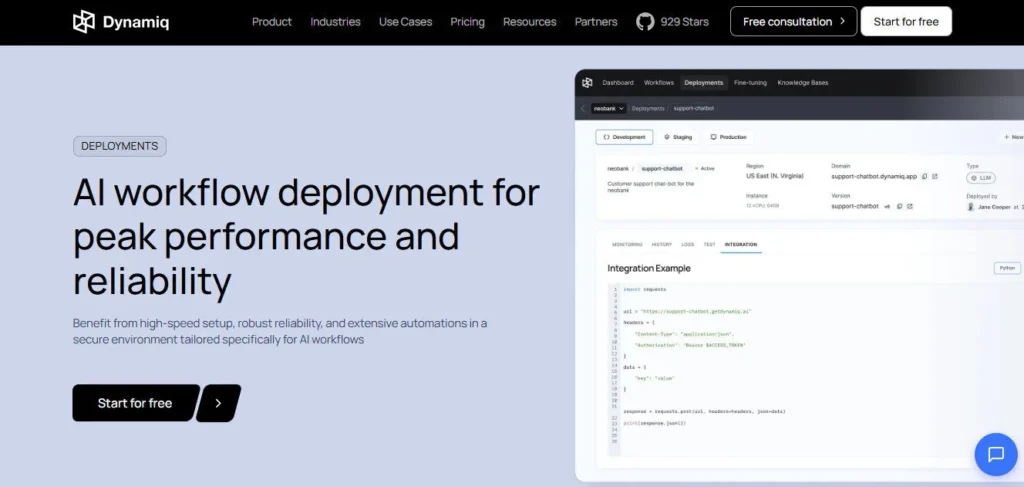

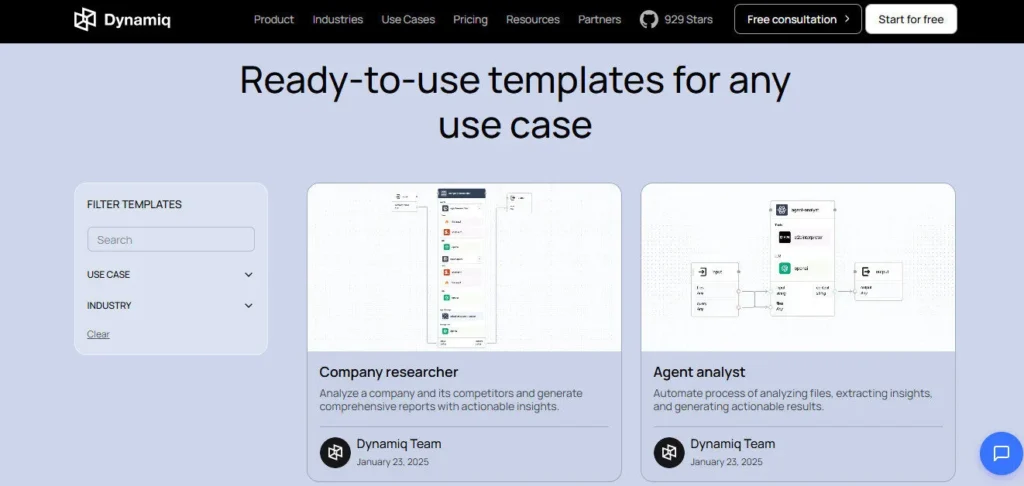

Dynamiq is an enterprise-grade LLMOps platform founded in 2024 by Vitalii Duk, a former engineering leader at Careem (acquired by Uber) who spent over a decade building MLOps infrastructure at scale. According to the company, the platform compresses a typical 6-month AI deployment cycle down to hours by giving technical teams a single workspace to prototype, test, deploy, monitor, and fine-tune AI agents and GenAI workflows.

It's built specifically for organizations that need full control over their data — particularly in regulated industries like finance, healthcare, and the public sector — with on-premise, hybrid, and VPC deployment options that keep sensitive data off shared cloud infrastructure.

Key Capabilities

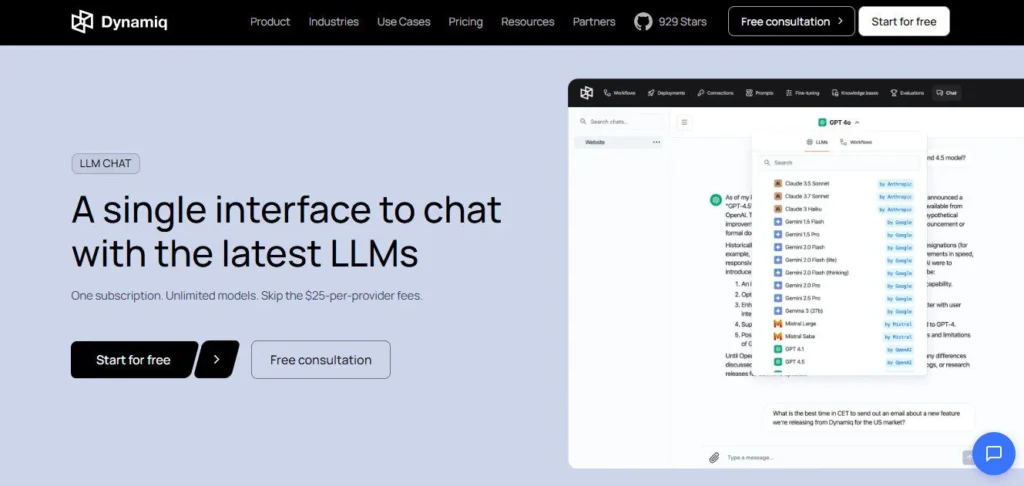

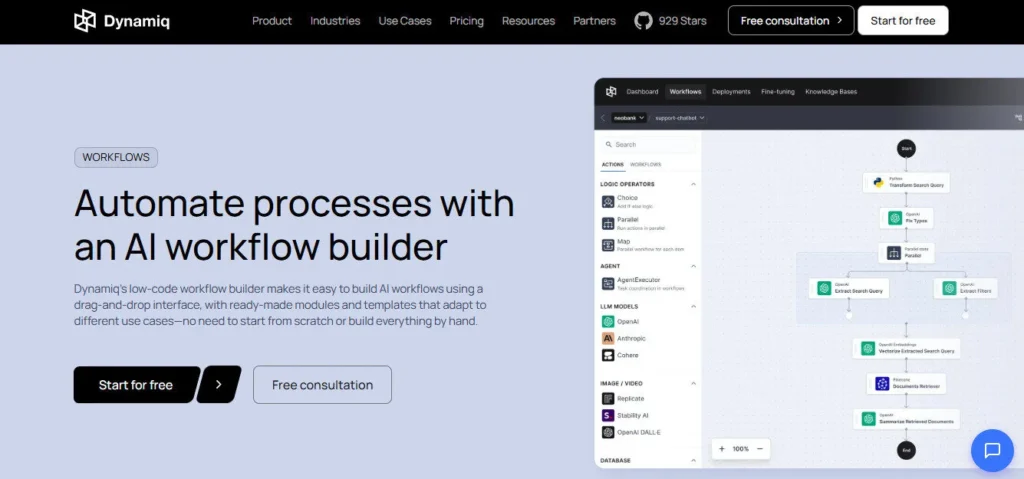

At the core of Dynamiq is its low-code drag-and-drop workflow canvas, where you visually compose LLM nodes, RAG retrievals, conditional logic, Python code blocks, and tool integrations into multi-step agentic pipelines. The platform supports the major LLM providers and model hubs (OpenAI, Anthropic Claude, Google Gemini, Meta Llama 2, Hugging Face models, and Replicate), so you're not locked into a single model provider.

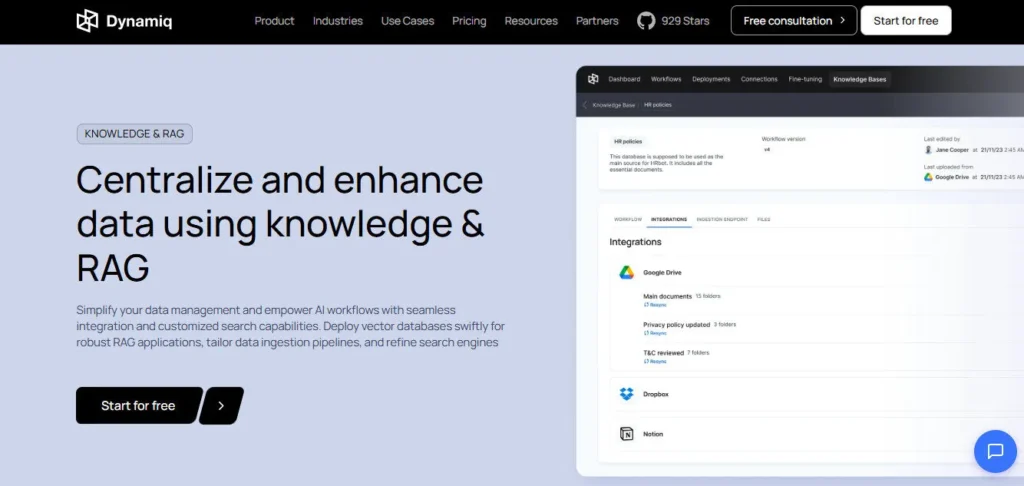

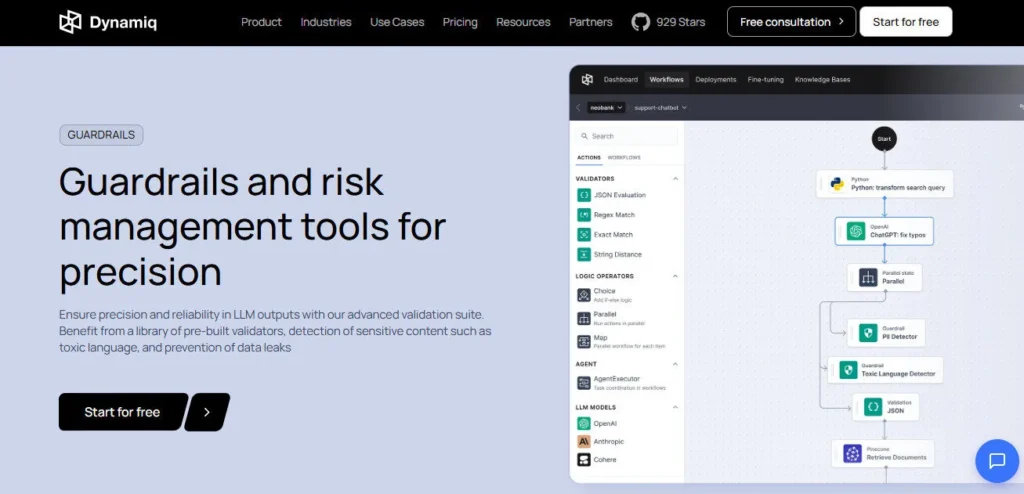

RAG knowledge bases ingest PDFs, documents, and data sources and connect to vector databases in minutes, giving your agents accurate retrieval over proprietary company data. Guardrails add a validation layer on top of every LLM output, catching hallucinations, sensitive data leaks, and format violations before responses reach users.
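To make the guardrail idea concrete, here is a minimal sketch of an output validator in plain Python. It is not Dynamiq's API: the function name `check_output`, the `GuardrailResult` type, and the two regex patterns are hypothetical, chosen only to illustrate checking a response for sensitive data and format violations before it is returned.

```python
import json
import re
from dataclasses import dataclass, field

# Hypothetical patterns for illustration; real PII detection needs far broader coverage.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
SSN_RE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


@dataclass
class GuardrailResult:
    passed: bool
    violations: list = field(default_factory=list)


def check_output(text: str, require_json: bool = False) -> GuardrailResult:
    """Run simple validators over a model response before it reaches the user."""
    violations = []
    if EMAIL_RE.search(text):
        violations.append("email address detected")
    if SSN_RE.search(text):
        violations.append("SSN-like number detected")
    if require_json:
        try:
            json.loads(text)
        except json.JSONDecodeError:
            violations.append("response is not valid JSON")
    return GuardrailResult(passed=not violations, violations=violations)


result = check_output("Contact jane.doe@example.com", require_json=True)
print(result.passed, result.violations)
```

A caller would typically block, redact, or regenerate the response whenever `passed` is false. Hallucination detection is a harder problem and is deliberately left out of this sketch.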

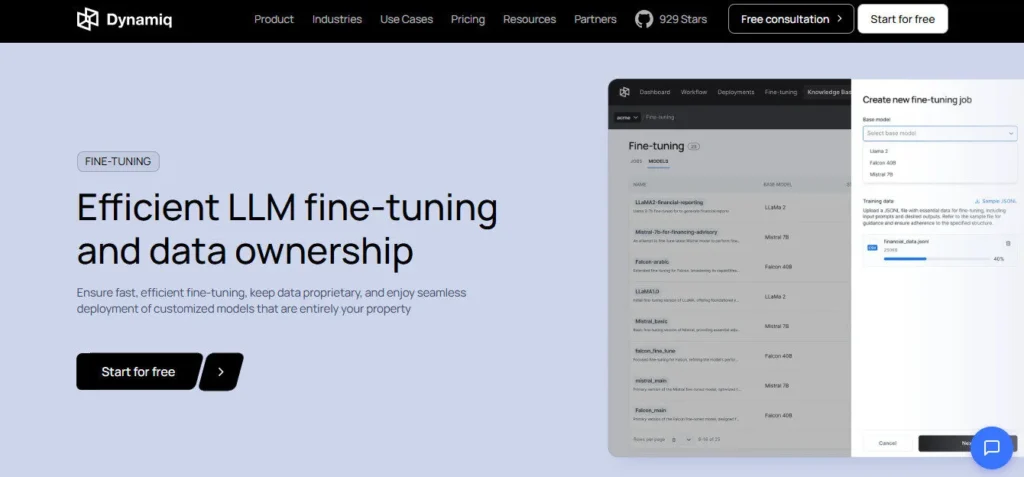

Two-click LLM fine-tuning lets enterprise teams train open-source models on their own datasets directly within the platform, then deploy those fine-tuned models as owned assets — not rented API calls.

Who Gets the Most Out of It

Enterprise AI and engineering teams get the most immediate value: Dynamiq aims to reduce the need for a dedicated MLOps team (the company claims savings of up to $600k annually) by handling infrastructure, orchestration, and observability in one place.

Finance and healthcare teams benefit most from on-premise deployment and HIPAA/SOC 2/GDPR compliance, which let them run AI agents over sensitive data while limiting regulatory exposure.

Product managers and AI architects with some technical background can use the low-code canvas to design and test full agentic workflows without writing infrastructure code, while data engineers can drop into Python nodes for custom logic wherever needed.

Is It Worth It?

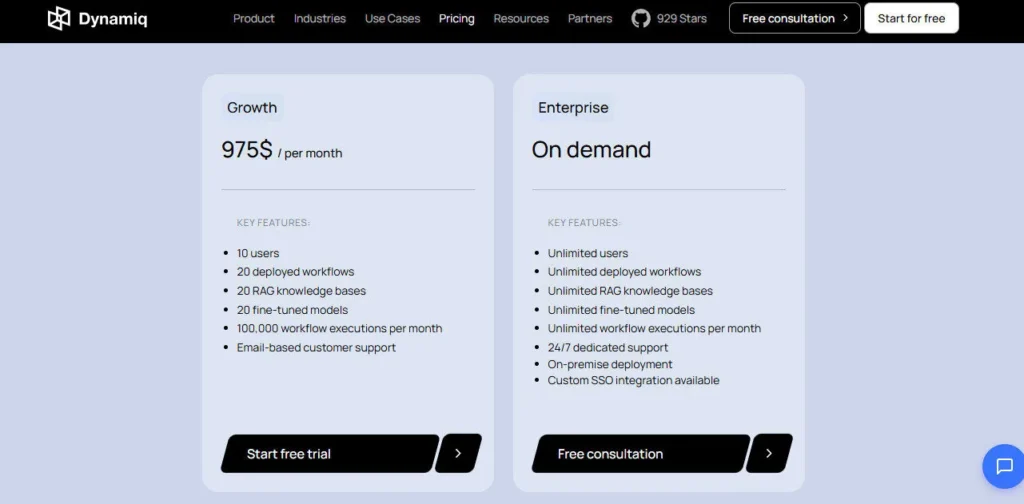

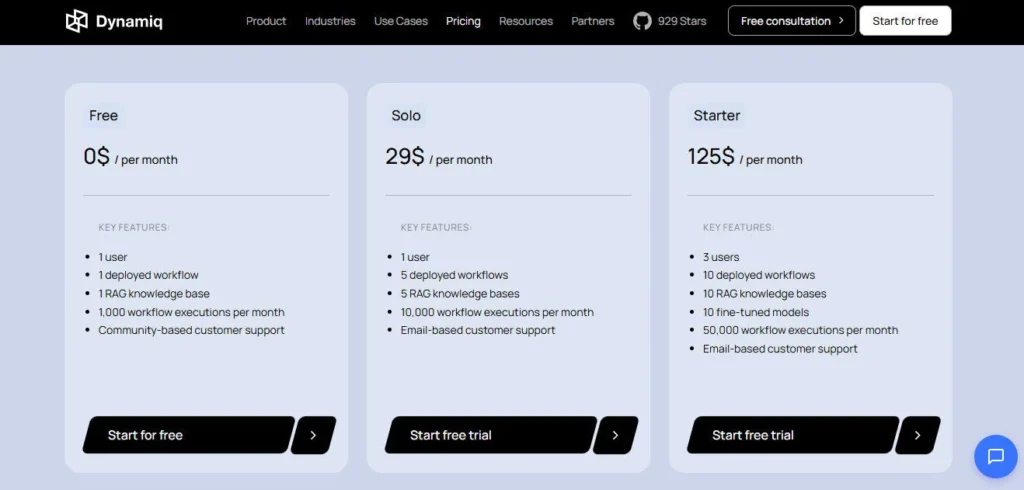

The Free plan at $0/month, with 1 deployed workflow and 1,000 executions per month, is functional enough to evaluate the platform seriously. The Solo plan at $29/month is aimed at individual developers, but the real jump in value (fine-tuning, 20 RAG knowledge bases, 10 users) requires the Growth plan at $975/month, a significant step up suited to teams rather than individuals.
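The gap between tiers is easier to judge as annual figures. The short calculation below uses only the monthly prices quoted in this review and assumes plain monthly billing with no annual discount, which the review does not confirm.

```python
# Monthly list prices quoted in this review (USD).
MONTHLY_PRICE = {"Free": 0, "Solo": 29, "Growth": 975}


def annual_cost(plan: str) -> int:
    """Twelve months at the quoted monthly price (assumes no annual discount)."""
    return MONTHLY_PRICE[plan] * 12


for plan in MONTHLY_PRICE:
    print(f"{plan}: ${annual_cost(plan):,} per year")

growth_vs_solo = MONTHLY_PRICE["Growth"] / MONTHLY_PRICE["Solo"]
print(f"Growth costs about {growth_vs_solo:.1f}x Solo")
```

On these numbers Solo comes to $348 per year and Growth to $11,700 per year, roughly 33.6 times the Solo price.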

For enterprises with stringent compliance requirements and the need to own their AI infrastructure, the ROI case is straightforward if the company's claims hold: Dynamiq says it reduces development time from months to hours and removes the cost of a dedicated ML infrastructure team.

Forbes coverage and a pre-seed funding round in 2024 point to early enterprise traction, though the platform is still young compared to more established LLMOps competitors.

Dynamiq is an enterprise LLMOps platform founded in 2024 by Vitalii Duk and headquartered in San Francisco, California. It enables engineering and AI teams to build, deploy, monitor, and fine-tune AI agents and agentic workflows using a low-code visual interface, with support for on-premise, hybrid, and cloud deployments. The platform is SOC 2, GDPR, and HIPAA compliant — designed specifically for regulated industries that need full data ownership and governance.

• Low-Code Workflow Builder — drag-and-drop canvas to visually compose multi-step agentic pipelines with LLM nodes, conditional logic, Python code blocks, RAG retrievals, and tool integrations; no deep ML engineering required.

• Multi-Model LLM Support — integrates natively with OpenAI, Anthropic Claude, Google Gemini, Meta Llama 2, Hugging Face, and Replicate; switch or combine models within a single workflow without rewriting logic.

• RAG Knowledge Bases — ingest PDFs, documents, and company data sources into vector databases in minutes; agents retrieve and cite your proprietary data rather than relying on generic LLM knowledge.

• Two-Click LLM Fine-Tuning — fine-tune open-source LLMs on your own datasets directly within the platform; models you build become your owned assets, not rented API endpoints.

• Guardrails & Output Validation — applies pre-built and custom validators to every LLM response to detect hallucinations, sensitive data exposure, PII leaks, and format violations before output reaches users.

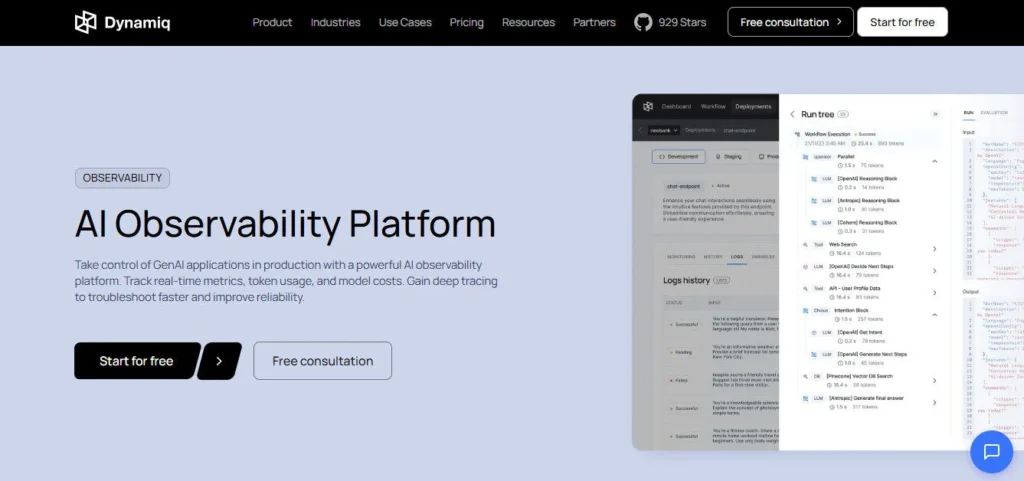

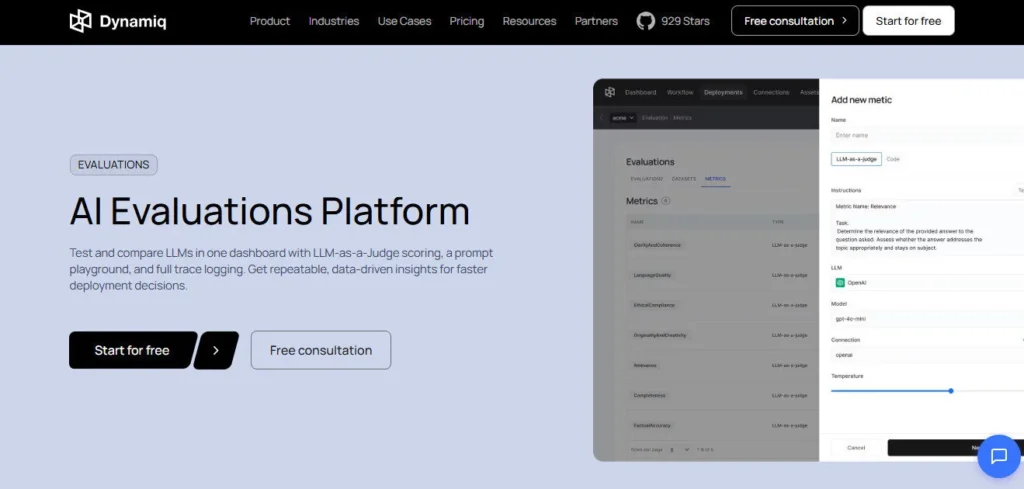

• Observability & Evaluations — logs all agent interactions, tracks key performance metrics, runs large-scale LLM quality evaluations, and provides real-time debugging views so engineering teams can monitor production behavior precisely.

• On-Premise & VPC Deployment — runs entirely within your own corporate infrastructure, VPC, AWS, IBM Cloud, or IBM watsonx; satisfies strict regulatory requirements in finance, healthcare, and the public sector.

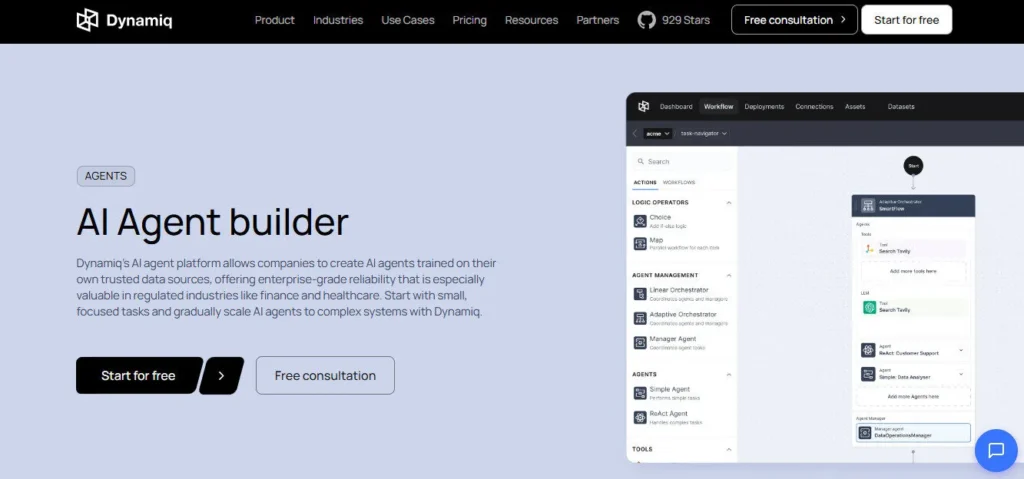

• Multi-Agent Orchestration — design and deploy networks of specialized LLM agents that collaborate on complex tasks, share context, and connect to internal APIs — all managed from a single visual interface.
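The RAG Knowledge Bases item in the list above boils down to a retrieve-then-prompt loop. The sketch below illustrates that loop with a toy bag-of-words cosine similarity in place of a real embedding model and vector database. It is an assumption-laden illustration, not Dynamiq's implementation, and the names `tokenize`, `retrieve`, and `build_prompt` are hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> Counter:
    """Lowercased word counts; a stand-in for a real embedding vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, chunks: list, k: int = 1) -> list:
    """Return the k chunks most similar to the query."""
    q = tokenize(query)
    ranked = sorted(chunks, key=lambda c: cosine(q, tokenize(c)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, chunks: list, k: int = 1) -> str:
    """Place the retrieved chunks ahead of the question so the model can ground its answer."""
    context = "\n".join(retrieve(query, chunks, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


docs = [
    "Refunds are processed within 14 days of a return request.",
    "Our office is closed on public holidays.",
    "Support is available by email on the Solo plan.",
]
print(build_prompt("How long do refunds take?", docs))
```

A production setup would swap `tokenize` and `cosine` for an embedding model and a vector index, but the control flow stays the same: rank chunks against the query, then pass the top results to the LLM as context.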

- ✔ On-premise and VPC deployment supports HIPAA, SOC 2, and GDPR compliance out of the box, which is rare at this price point

- ✔ Supports OpenAI, Anthropic, Gemini, Llama 2, Hugging Face, and Replicate in one platform, with no single-vendor lock-in

- ✔ Two-click LLM fine-tuning lets teams own their models rather than paying per-token rental fees indefinitely

- ✔ Low-code canvas reduces a 6-month AI development cycle to hours according to company-published benchmarks

- ✔ Built-in guardrails prevent PII leaks, hallucinations, and format violations before output ever reaches end users

- ✔ Free plan includes 1 deployed workflow and 1,000 executions per month, enough to meaningfully evaluate the platform

- ✔ Forbes-covered and pre-seed funded in 2024, signaling early enterprise validation from credible third parties

- × Growth plan jumps to $975/month, a steep step up from Solo at $29/month with no mid-tier option in between

- × Fine-tuning and multi-user collaboration are locked behind the $975/month Growth plan, excluding solo developers and small teams

- × The platform was founded in 2024 and is still early-stage; long-term reliability and roadmap maturity remain unproven vs. established LLMOps competitors

- × Enterprise pricing is not publicly listed, requiring a sales call before you can evaluate total cost of ownership

- × Community and email-only support on Free and Solo plans; dedicated support requires enterprise engagement

- × With 11–50 employees, the team is small relative to the complexity of enterprise deployments it targets

Dynamiq is built for technical teams at mid-size to large organizations that need to deploy reliable, compliant AI agents without assembling a dedicated ML infrastructure team from scratch.

• Enterprise AI and engineering teams — need a single platform to prototype, deploy, monitor, and fine-tune agentic workflows without managing separate MLOps, vector DB, and observability tools.

• Finance, healthcare, and public sector organizations — require on-premise or VPC deployment to satisfy SOC 2, GDPR, and HIPAA requirements before any AI touches sensitive customer or patient data.

• AI architects and product managers with technical backgrounds — use the low-code canvas to design full agentic pipelines and test LLM outputs without writing infrastructure code, while data engineers extend logic using Python nodes.

• Enterprises exploring LLM ownership — want to fine-tune and own open-source LLMs on proprietary data rather than paying indefinite per-token fees to third-party API providers.

Dynamiq stands apart from generic no-code AI builders through its enterprise-first architecture — combining on-premise deployment, LLM ownership via fine-tuning, and a full observability stack in a single low-code platform.

• On-Premise and VPC Deployment as a Standard Feature — most LLMOps and AI agent platforms are cloud-only; Dynamiq supports deployment inside your own VPC, AWS environment, IBM Cloud, or IBM watsonx, making it one of the few platforms where regulated-industry teams can run AI agents without sending data off-premises.

• Two-Click LLM Fine-Tuning with Ownership — rather than just calling external LLM APIs, Dynamiq lets you fine-tune open-source models on your own data directly in the platform and retain them as owned assets; this transitions teams from paying per-token rental fees to building proprietary AI models.

• Full LLMOps Lifecycle in One Platform — most tools specialize in either building (workflow canvas), deploying (serving infrastructure), or monitoring (observability); Dynamiq handles prototype, test, deploy, observe, and fine-tune in a single workspace, eliminating the 4–6 tool stack most enterprise AI teams currently manage.

• Guaranteed Structured Output — LLMs are forced to follow a set output format (JSON, YAML, etc.) at the platform level rather than relying on prompt engineering alone; this is critical for enterprise workflows where downstream systems depend on predictable, parseable AI responses.
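For contrast with platform-level enforcement, the sketch below shows the validate-and-retry approach that teams often fall back on when output format depends on prompting alone. It is a different technique from what the review describes, shown here only to illustrate why downstream systems need a format guarantee. The schema, `parse_structured`, `call_with_retries`, and the fake model are all hypothetical.

```python
import json
from typing import Callable

# Hypothetical schema: field name -> expected Python type.
SCHEMA = {"customer_id": str, "priority": int}


def parse_structured(raw: str, schema: dict) -> dict:
    """Parse JSON and check required keys and types; raise ValueError on any mismatch."""
    data = json.loads(raw)  # json.JSONDecodeError is a subclass of ValueError
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    for key, expected in schema.items():
        if key not in data:
            raise ValueError(f"missing field: {key}")
        if not isinstance(data[key], expected):
            raise ValueError(f"wrong type for field: {key}")
    return data


def call_with_retries(model: Callable[[str], str], prompt: str,
                      schema: dict, max_attempts: int = 3) -> dict:
    """Call the model until its reply passes validation or attempts run out."""
    last_error = None
    for _ in range(max_attempts):
        try:
            return parse_structured(model(prompt), schema)
        except ValueError as err:
            last_error = err
    raise RuntimeError(f"no valid output after {max_attempts} attempts: {last_error}")


# Fake model for demonstration: two malformed replies, then a valid one.
replies = iter([
    "not json",
    '{"customer_id": "c-17"}',
    '{"customer_id": "c-17", "priority": 2}',
])
result = call_with_retries(lambda prompt: next(replies), "classify ticket", SCHEMA)
print(result)
```

Each retry costs another model call, which is the practical argument for enforcing the format at the platform level instead.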

Dynamiq integrates with major LLM providers, cloud infrastructure environments, and enterprise data systems for end-to-end agentic AI deployment.

• LLM Providers — connects natively to OpenAI (GPT-4o, GPT-4), Anthropic (Claude 3.5), Google Gemini, Meta Llama 2, Hugging Face models, and Replicate; multiple models can be combined within a single workflow.

• Cloud & Infrastructure Deployment — supports cloud-native SaaS, on-premise VPC, AWS, IBM Cloud, and IBM watsonx catalog deployment; Dedicated Infrastructure mode keeps all fine-tuning and model serving within your own environment.

• Vector Databases & RAG Data Sources — ingests PDFs, documents, and structured data into built-in vector storage; supports integration with external vector DBs for teams with existing data infrastructure.

• Internal APIs & Enterprise Systems — AI Actions and Python code nodes connect agents to any internal REST API, database, or third-party service; the AgentOps layer manages API connections and tool calls for multi-agent orchestration at scale.

Make

Automate Your Workflows Visually, Simply, & Intelligently.

Relevance AI

Build and deploy autonomous AI agent workforces for sales, marketing, and operations — no code required.

Pagergpt AI

Build and deploy intelligent AI agents trained on your data — no code, no friction.

Dynamiq is one of the most technically complete LLMOps platforms available in 2026 for enterprises that need to build, own, and control their AI agent infrastructure — especially in regulated industries where cloud-only tools are not an option.

The free and Solo plans make it easy to evaluate, but the real value and depth sit behind the $975/month Growth plan and above, meaning Dynamiq is not the right fit for startups or solo developers on tight budgets.

For mid-size to enterprise engineering teams ready to invest in a full AI agent stack with compliance and observability built in from day one, Dynamiq delivers a compelling and differentiated platform.
